AISE · Independent research · Field-tested via mcp-graph-workflow

Software Engineer at Vivo / Telefónica, independent researcher in AISE — AI-driven Software Engineering. I treat engineering with agents as a research practice: hypothesis → harness → measurement → publication.

- 🔬 Independent AISE research — a one-person lab on how coding agents should actually operate in production.

- 🔍 Current focus: harness search — how the agent retrieves code, context and memory inside its own harness, without hallucinating or blowing the context window.

- 🚀 Field proof: mcp-graph-workflow — where the research turns into a usable tool. PRD → graph → TDD → PR, local-first, AGPL.

AISE (AI-driven Software Engineering) is my applied-research label: a one-person lab focused on turning "shipping with AI" from folklore into measurable discipline.

Active lines of work:

| Line | Question | Status |
|---|---|---|
| Harness Search | How does the agent find context without hallucinating or blowing the context window? | Spotlight (§ below) |
| Determinism via persistent graph | Can generation entropy be reduced by anchoring the agent on a traceable PRD → graph → PR? | Shipping via mcp-graph-workflow |
| Memory & context compression | How can decisions be preserved across sessions without inflating the context? | Iterating |

Research notes published on the blog.

How the agent searches inside its own harness — code, context, memory, prior decisions — without hallucinating or blowing the context window.

Search inside the harness is what separates an agent that guesses from an agent that knows. It's also the silent bottleneck of most AI workflows today: the agent "forgets" not because it lacks memory, but because it doesn't know how to search the memory it has.

Pipeline: query → embeddings → SQLite graph → AST → ranked context → agent, with a feedback loop from the agent back to the query.
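A minimal sketch of the "ranked context" step, assuming each candidate chunk already carries an embedding and a precomputed token count. The `Chunk` shape, `rankedContext`, and the `minScore` threshold are hypothetical, not mcp-graph-workflow's API. Chunks are ranked by cosine similarity to the query embedding and greedily packed into a fixed token budget, which is one simple way to keep retrieval from blowing the context window.

```typescript
// Hypothetical chunk shape: any retrievable unit (ADR, task, code span).
interface Chunk {
  id: string;
  embedding: number[];
  tokens: number; // precomputed token count
}

// Cosine similarity between two equal-length vectors.
function cosine(a: number[], b: number[]): number {
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    normA += a[i] * a[i];
    normB += b[i] * b[i];
  }
  if (normA === 0 || normB === 0) return 0; // avoid division by zero
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Rank by similarity, drop weak matches, then pack greedily into the budget.
function rankedContext(query: number[], chunks: Chunk[], budget: number, minScore = 0.5): string[] {
  const ranked = chunks
    .map((chunk) => ({ chunk, score: cosine(query, chunk.embedding) }))
    .filter((r) => r.score >= minScore) // weak matches never enter the context
    .sort((x, y) => y.score - x.score); // best match first
  const picked: string[] = [];
  let used = 0;
  for (const { chunk } of ranked) {
    if (used + chunk.tokens > budget) continue; // would overflow: try the next one
    picked.push(chunk.id);
    used += chunk.tokens;
  }
  return picked;
}

const candidates: Chunk[] = [
  { id: "adr-007", embedding: [1, 0, 0], tokens: 300 },
  { id: "task-42", embedding: [0.9, 0.1, 0], tokens: 500 },
  { id: "readme", embedding: [0, 1, 0], tokens: 200 },
];
console.log(rankedContext([1, 0, 0], candidates, 700)); // ["adr-007"]
console.log(rankedContext([1, 0, 0], candidates, 800)); // ["adr-007", "task-42"]
```

Here `task-42` is relevant but only fits once the budget reaches 800 tokens, while `readme` never clears the similarity threshold. Greedy packing is the simplest choice; a knapsack-style packer could fit more relevant tokens at higher cost.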

Five lines of investigation:

- 🧠 Local RAG over SQLite — embeddings of PRDs, tasks and decisions; semantic recall in <50 ms, zero cloud.

- 🧭 Code-aware multi-language search — graph↔code sync detects drift; agentic grep with AST awareness across 13 languages.

- 📦 Hierarchical context compression — summaries preserve decisions across sessions without replaying the raw history.

- 🧪 Retrieval-grounded TDD: before proposing an implementation, the agent searches for existing tests/cases; a hook blocks the commit when it skips that step.

- 🛡️ Citation-enforced anti-hallucination (MCP-Graph v13 · `epic-13`): new code under `src/core/` must cite the ADR or epic that motivated the decision. If it doesn't, the `validateFilesCitations` validator blocks the commit. Search becomes mandatory grounding, not a nice-to-have: when the agent can't cite, that's a hallucination signal.
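For the code-aware search line, graph↔code drift can be sketched as a set comparison between the symbols the graph tracks and the symbols an AST pass finds in the code. The `file#symbol` keys, `DriftReport`, and `detectDrift` are hypothetical illustrations; extracting the symbols across 13 languages is the hard part and is out of scope here.

```typescript
// Hypothetical report shape for graph↔code drift.
interface DriftReport {
  missingInCode: string[]; // graph references a symbol that no longer exists
  untracked: string[]; // code has a symbol the graph does not know about
}

// Compare the graph's view of the code with what an AST pass actually found.
function detectDrift(graphSymbols: string[], codeSymbols: string[]): DriftReport {
  const graph = new Set(graphSymbols);
  const code = new Set(codeSymbols);
  return {
    missingInCode: Array.from(graph).filter((s) => !code.has(s)).sort(),
    untracked: Array.from(code).filter((s) => !graph.has(s)).sort(),
  };
}

// A renamed function shows up on both sides of the report.
const report = detectDrift(
  ["src/core/graph.ts#buildGraph", "src/core/rag.ts#search"],
  ["src/core/graph.ts#buildGraph", "src/core/rag.ts#searchV2"],
);
console.log(report);
```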

All of this runs inside mcp-graph-workflow — the next section is the field proof.

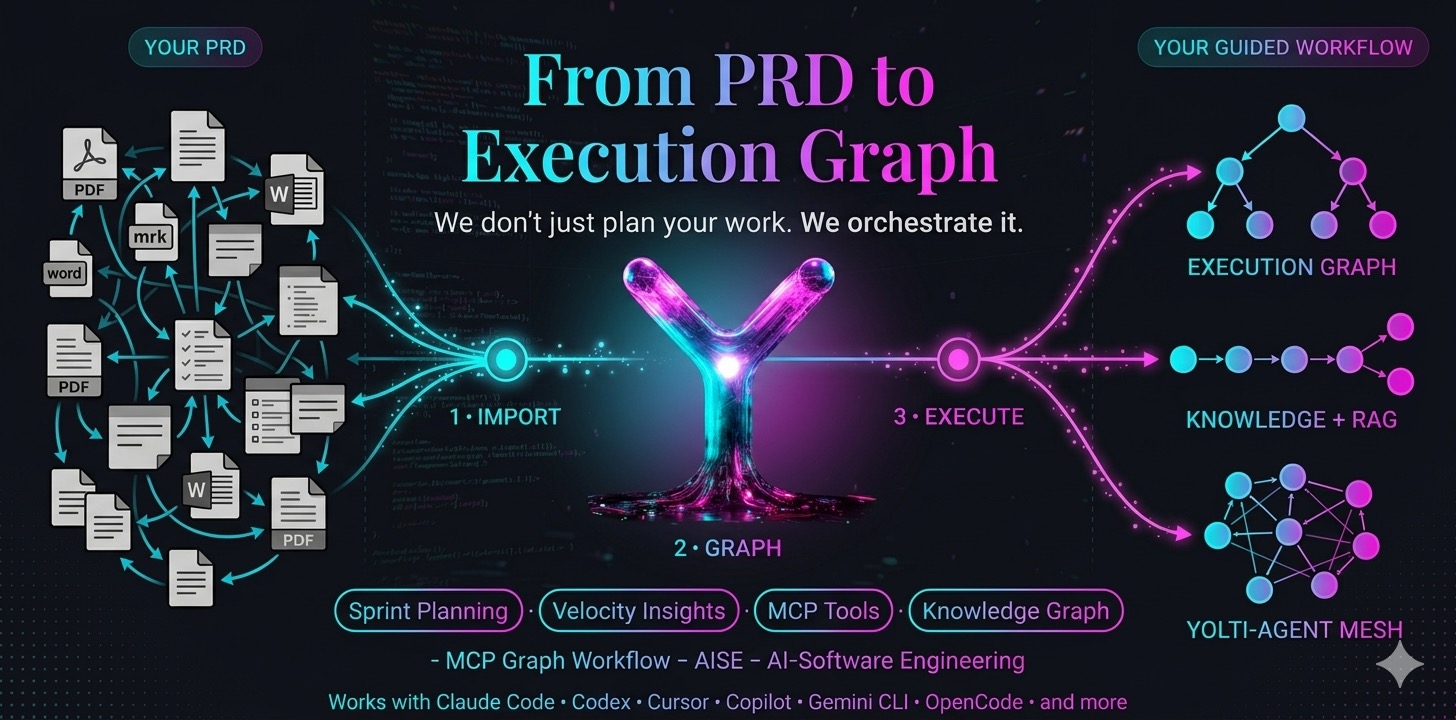

Where AISE research turns into a tool. A local-first MCP server that converts PRDs into persistent execution graphs on SQLite, with embedded RAG and TDD hooks. No cloud, no LLM key, no improvisation.

From PRD to Execution Graph — we don't just plan your work, we orchestrate it.

`npm install -g @mcp-graph-workflow/mcp-graph`

When an AI agent writes new code under `src/core/`, it is required to cite the ADR or epic that motivated the decision. If it can't, that's a hallucination signal: implementation with no spec to back it up. The `validateFilesCitations` validator flags new files under `src/core/` without citations as violations and blocks the commit.

search → grounding → citation → validation: the loop closes. Search stops being a convenience and becomes a precondition for writing code. Shipping since v13 · tag `epic-13` · validator: `validateFilesCitations`.
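A minimal sketch of such a citation gate, assuming citations appear in the file content as `ADR-<n>` or `epic-<n>`. `checkCitations` and the `NewFile` shape are hypothetical; this is not the actual `validateFilesCitations` implementation, whose citation format and inputs may differ.

```typescript
// Hypothetical input: a file added in the commit being validated.
interface NewFile {
  path: string;
  content: string;
}

// Assumed citation format: ADR-<number> or epic-<number>, case-insensitive.
const CITATION = /\b(ADR|epic)-\d+\b/i;

// Return the paths that violate the rule: new files under the guarded
// directory with no ADR/epic citation anywhere in their content.
function checkCitations(files: NewFile[], guarded = "src/core/"): string[] {
  return files
    .filter((f) => f.path.startsWith(guarded))
    .filter((f) => !CITATION.test(f.content))
    .map((f) => f.path);
}

const violations = checkCitations([
  { path: "src/core/planner.ts", content: "// Motivated by ADR-012\nexport const x = 1;" },
  { path: "src/core/cache.ts", content: "export const y = 2;" },
  { path: "src/ui/button.ts", content: "export const z = 3;" }, // outside the guarded dir
]);
console.log(violations); // ["src/core/cache.ts"]
```

In a pre-commit hook, a non-empty result would be turned into a non-zero exit code, which is what makes git refuse the commit.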

9-phase cycle:

ANALYZE → DESIGN → PLAN → IMPLEMENT → VALIDATE → REVIEW → HANDOFF → DEPLOY → LISTENING
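The 9-phase cycle reads naturally as a linear state machine. A sketch assuming phases advance strictly in order and that LISTENING wraps back to ANALYZE for the next iteration; `PHASES` and `nextPhase` are illustrative names, not the tool's API.

```typescript
// Phase names taken from the cycle above, in order.
const PHASES = [
  "ANALYZE", "DESIGN", "PLAN", "IMPLEMENT", "VALIDATE",
  "REVIEW", "HANDOFF", "DEPLOY", "LISTENING",
] as const;

type Phase = (typeof PHASES)[number];

// Advance one step; wrapping LISTENING back to ANALYZE is an assumption.
function nextPhase(current: Phase): Phase {
  const i = PHASES.indexOf(current);
  return PHASES[(i + 1) % PHASES.length];
}

console.log(nextPhase("VALIDATE")); // REVIEW
```

Typing `Phase` as a union of literals means a misspelled phase is a compile error rather than a silent runtime state.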

Key capabilities:

- 🛡️ Anti-hallucination via citation enforcement (v13): `validateFilesCitations` requires an ADR/epic citation on every new file under `src/core/`; no citation, no commit.

- ⚡ Pipeline tools (`start_task` + `finish_task`) that cut MCP calls by an order of magnitude.

- 🤖 Agent State Machine: every response signals the next action to the agent.

- 📊 Built-in DORA metrics (deployment frequency, lead time, MTTR).

- 🧠 Cross-project learning: import knowledge across projects.

- 🔍 Code-aware sync detects graph↔code drift across 13 languages.

- 🧩 Smart decompose splits tasks by acceptance criterion.
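The three DORA metrics listed above are computed over deployment and incident events. A minimal sketch, assuming hypothetical `Deployment` and `Incident` records and a fixed reporting window; the aggregation choices (median lead time, mean restore time) are common conventions but assumptions here, not necessarily how mcp-graph-workflow computes them.

```typescript
// Hypothetical event shapes; a real tool would derive these from git and deploy logs.
interface Deployment {
  committedAt: Date; // first commit of the change
  deployedAt: Date; // change running in production
}

interface Incident {
  startedAt: Date;
  restoredAt: Date;
}

const HOUR_MS = 60 * 60 * 1000;

function median(values: number[]): number {
  if (values.length === 0) return NaN; // undefined for an empty window
  const sorted = [...values].sort((a, b) => a - b);
  const mid = Math.floor(sorted.length / 2);
  return sorted.length % 2 === 1 ? sorted[mid] : (sorted[mid - 1] + sorted[mid]) / 2;
}

function mean(values: number[]): number {
  return values.length === 0 ? NaN : values.reduce((a, b) => a + b, 0) / values.length;
}

function hoursBetween(from: Date, to: Date): number {
  return (to.getTime() - from.getTime()) / HOUR_MS;
}

function doraMetrics(deploys: Deployment[], incidents: Incident[], windowDays: number) {
  return {
    deploysPerWeek: deploys.length / (windowDays / 7), // deployment frequency
    leadTimeHours: median(deploys.map((d) => hoursBetween(d.committedAt, d.deployedAt))),
    mttrHours: mean(incidents.map((i) => hoursBetween(i.startedAt, i.restoredAt))),
  };
}

const at = (iso: string) => new Date(iso);
const metrics = doraMetrics(
  [
    { committedAt: at("2024-01-01T00:00:00Z"), deployedAt: at("2024-01-01T04:00:00Z") },
    { committedAt: at("2024-01-02T00:00:00Z"), deployedAt: at("2024-01-02T10:00:00Z") },
    { committedAt: at("2024-01-03T00:00:00Z"), deployedAt: at("2024-01-03T02:00:00Z") },
  ],
  [{ startedAt: at("2024-01-05T00:00:00Z"), restoredAt: at("2024-01-05T01:30:00Z") }],
  14,
);
console.log(metrics); // deploysPerWeek 1.5, leadTimeHours 4, mttrHours 1.5
```

The median keeps a single slow change from dominating lead time, while MTTR is conventionally reported as a mean.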

Differentiation:

- vs Cursor / Copilot alone → persistence + governance across sessions.

- vs Linear / Jira → graph executable by the agent, not just a visual board.

- vs LangGraph & friends → local-first, zero infra, single CLI.

Productivity and rework-reduction numbers are internal measurements over end-to-end PRD→PR flows. Methodology detailed on the blog.

Compatibility:

| Client | How it connects | Status in repo |
|---|---|---|
| Claude Code | `.mcp.json` + `.claude/` (hooks, rules, skills) | ✅ Pre-configured |
| GitHub Copilot (VS Code) | `.vscode/mcp.json` | ✅ Pre-configured |
| Gemini CLI | `.gemini/settings.json` | ✅ Pre-configured |
| Codex · Cursor · OpenCode · IntelliJ · Windsurf · Zed | same `.mcp.json` snippet | |

- ♟️ xadrez-3D — 3D side project, an exercise in physics and UX.

- 📝 diegonogueira.blog — research notes on AISE, MCP and discipline with agents.

I work in TypeScript / Node on local SQLite, with Vitest + Playwright as the test harness, MCP as the tools protocol, and Claude as the primary agent model.

AISE — research first, ship second, hype never.