diff --git a/.gitignore b/.gitignore

new file mode 100644

index 0000000..02ea1d8

--- /dev/null

+++ b/.gitignore

@@ -0,0 +1,5 @@

+datasets/*

+!datasets/.gitkeep

+.DS_Store

+__pycache__/

+*.egg-info/

\ No newline at end of file

diff --git a/convolutional_ar.egg-info/PKG-INFO b/convolutional_ar.egg-info/PKG-INFO

new file mode 100644

index 0000000..dfaac58

--- /dev/null

+++ b/convolutional_ar.egg-info/PKG-INFO

@@ -0,0 +1,105 @@

+Metadata-Version: 2.4

+Name: convolutional_ar

+Version: 0.0.1.dev0

+Summary: Convolutional autoregressive models.

+Home-page: https://github.com/voytekresearch/convolutional_ar

+Download-URL: https://github.com/voytekresearch/convolutional_ar/releases

+Author: The Voytek Lab

+Author-email: voyteklab@gmail.com

+Maintainer: Ryan Hammonds

+Maintainer-email: rphammonds@ucsd.edu

+License: Apache License, 2.0

+Keywords: convolutional,autoregressive,image,texture

+Platform: any

+Classifier: Development Status :: 5 - Production/Stable

+Classifier: Intended Audience :: Science/Research

+Classifier: Topic :: Scientific/Engineering

+Classifier: License :: OSI Approved :: Apache Software License

+Classifier: Operating System :: Microsoft :: Windows

+Classifier: Operating System :: MacOS

+Classifier: Operating System :: POSIX

+Classifier: Operating System :: Unix

+Classifier: Programming Language :: Python

+Classifier: Programming Language :: Python :: 3.7

+Classifier: Programming Language :: Python :: 3.8

+Classifier: Programming Language :: Python :: 3.9

+Classifier: Programming Language :: Python :: 3.10

+Classifier: Programming Language :: Python :: 3.11

+Requires-Python: >=3.6

+Requires-Dist: numba==0.58.1

+Requires-Dist: numpy==1.26.2

+Requires-Dist: matplotlib==3.8.2

+Requires-Dist: scikit-learn==1.3.2

+Requires-Dist: scipy==1.11.4

+Requires-Dist: spectrum==0.8.1

+Requires-Dist: torch==2.1.2

+Requires-Dist: torchvision==0.16.2

+Provides-Extra: tests

+Requires-Dist: pytest; extra == "tests"

+Requires-Dist: pytest-cov; extra == "tests"

+Provides-Extra: all

+Requires-Dist: pytest; extra == "all"

+Requires-Dist: pytest-cov; extra == "all"

+Dynamic: author

+Dynamic: author-email

+Dynamic: classifier

+Dynamic: description

+Dynamic: download-url

+Dynamic: home-page

+Dynamic: keywords

+Dynamic: license

+Dynamic: maintainer

+Dynamic: maintainer-email

+Dynamic: platform

+Dynamic: provides-extra

+Dynamic: requires-dist

+Dynamic: requires-python

+Dynamic: summary

+

+# Autoregressive Models for Texture Images

+

+# Convolutional Autoregressive Model

+

+ +

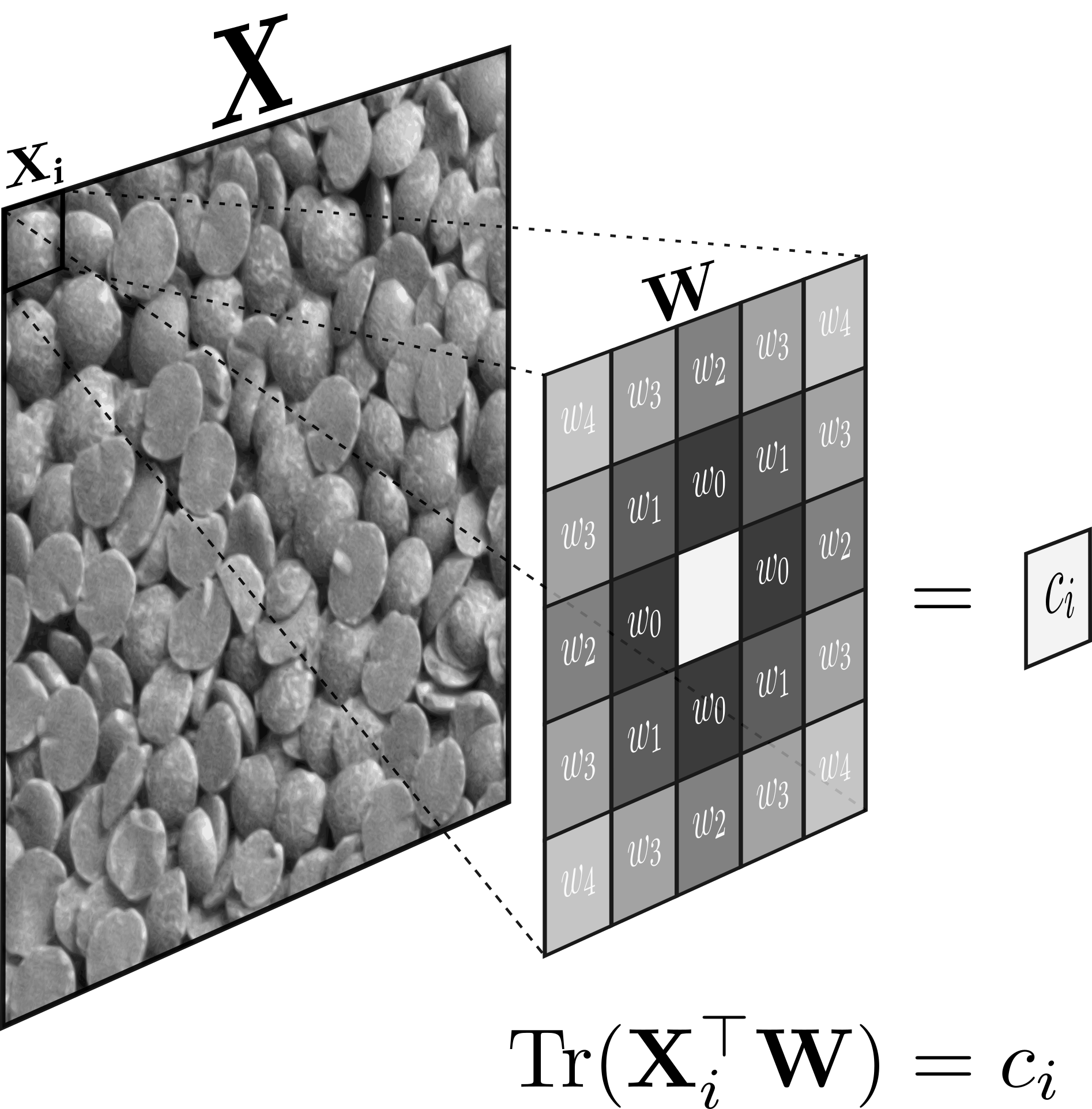

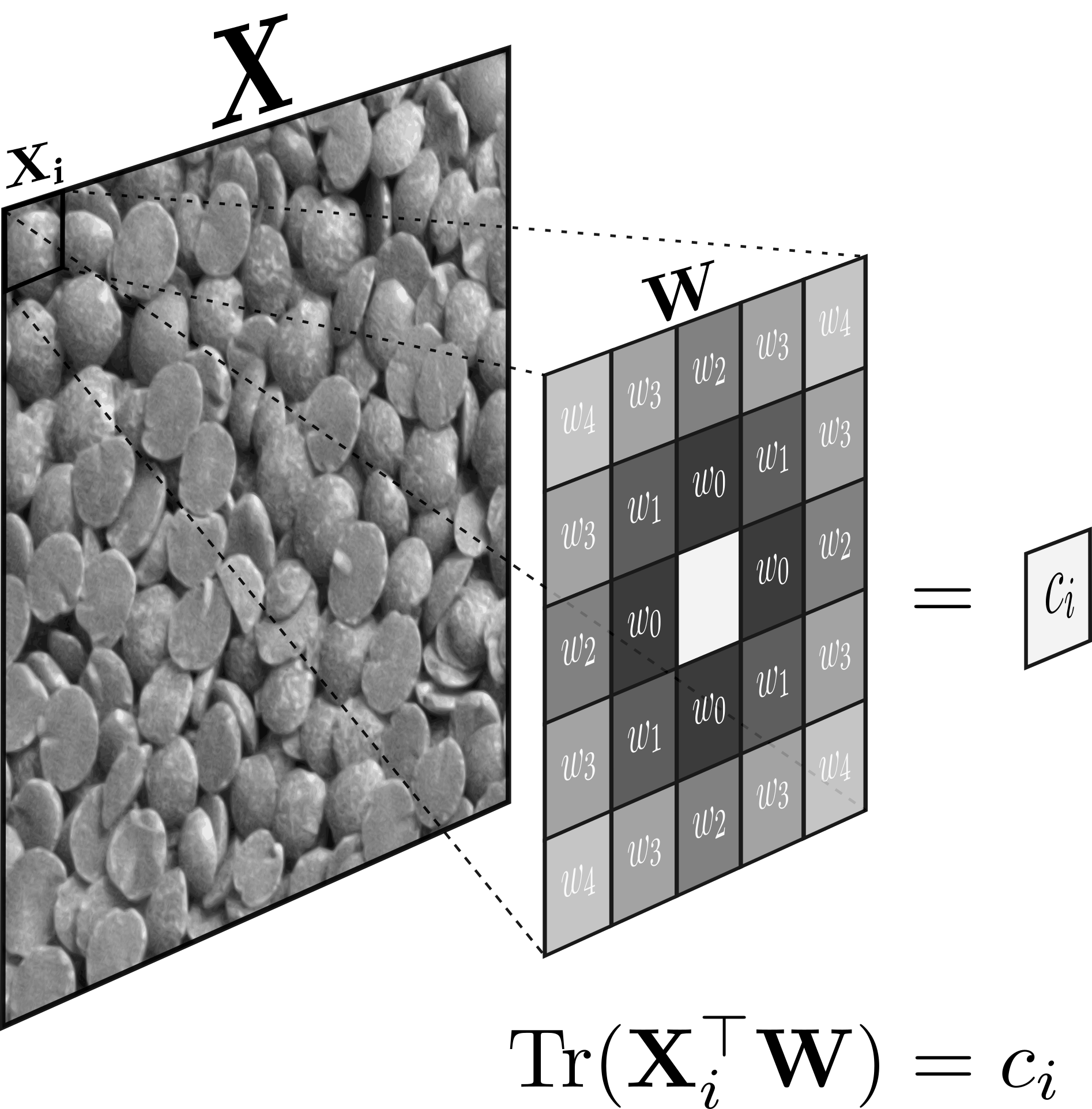

+The weights of a convolution kernel are optimized to best predict the center pixel, $c_i \in \mathbf{X}_i$, of each window, $\mathbf{X}_i$. The weights are constrained by distance from the center, so kernel positions at the same distance share a weight; e.g. the first three weights, $\{w_0, w_1, w_2\}$, correspond to indices in the kernel at distances $\{1, \sqrt{2}, 2\}$ from the center pixel. Each prediction is the Frobenius inner product between the window and the kernel.

+

+ +

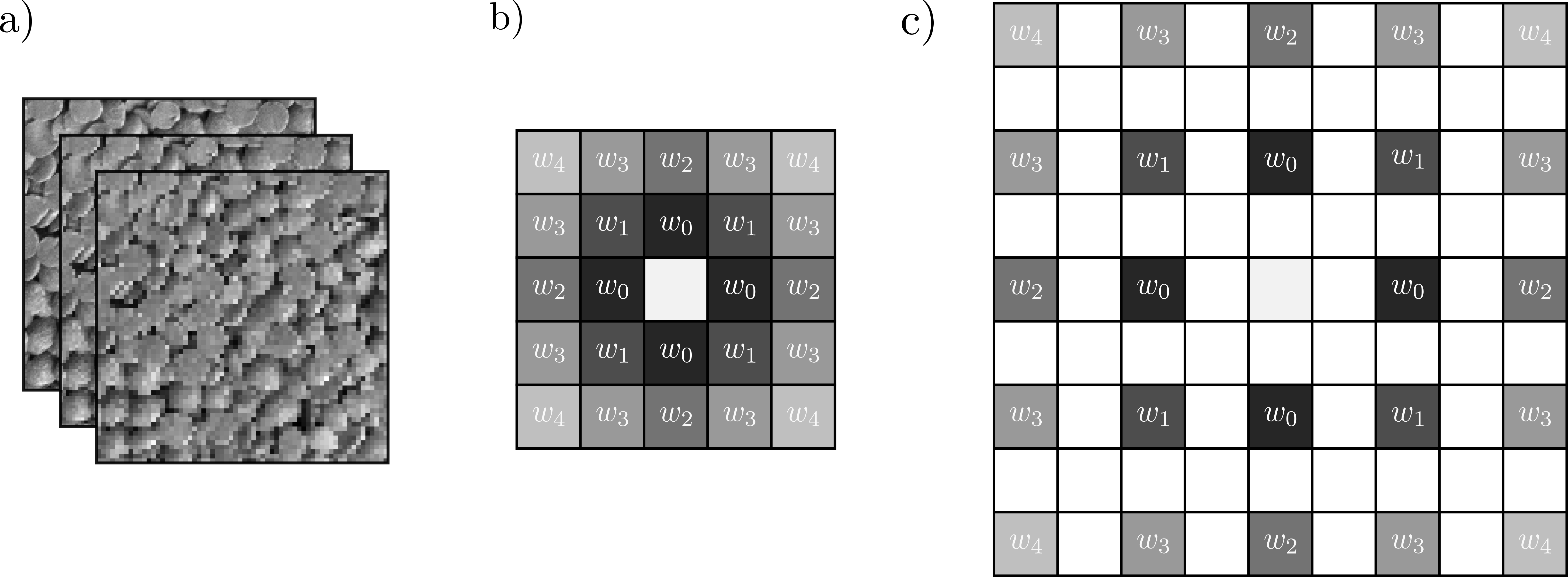

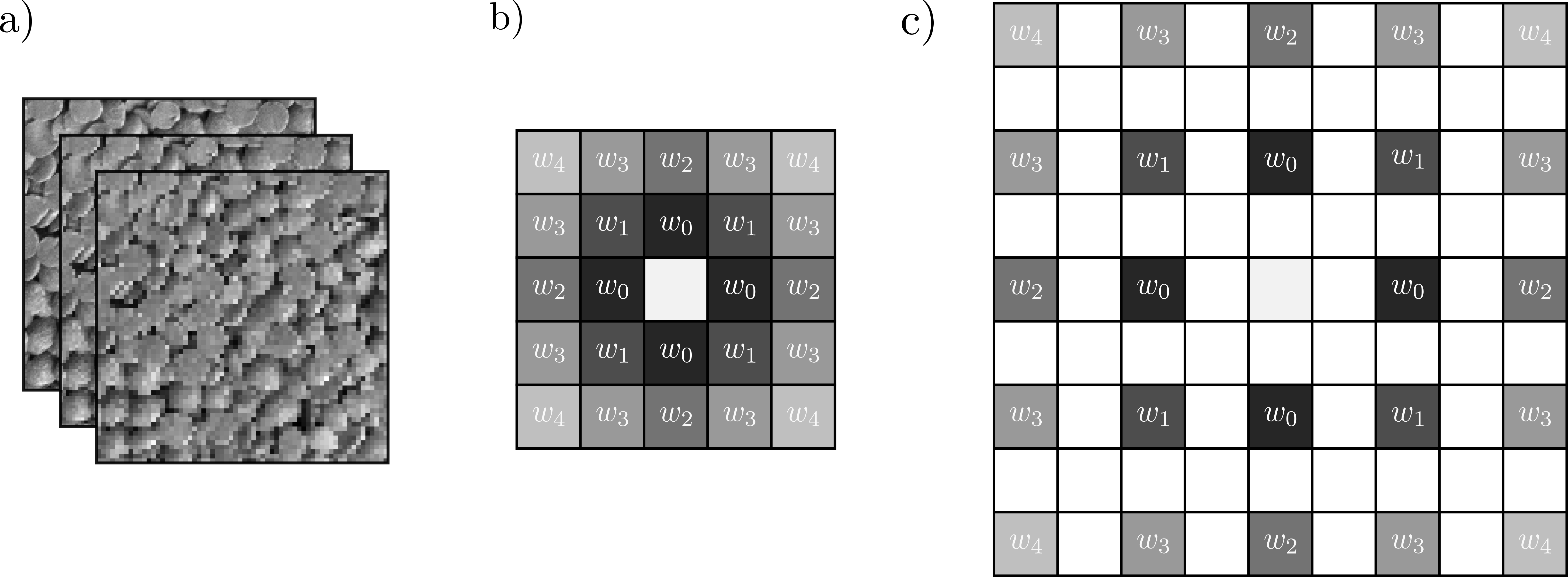

+Multiple convolution kernels are learned to account for the various spatial scales in an image. The different scales are obtained by dilating the kernel.

+

+## Datasets

+

+Kylberg textures. Example images of each class are not reproduced here.

+

+## Results

+

+The top row reports the model in this repository (ConvAR). The remaining rows (CNNs with millions of parameters) are described in Andrearczyk & Whelan (2016).

+

+

+| | Kylberg | CUReT | DTD | kth-tips-2b | ImNet-T | ImNet-S1| ImNet-S2 | ImageNet |

+|:--------------:|:-----------|:-----------|:-----------|:------------|:--------|:--------|:----------|:---------|

+| ConvAR | 99.6 | 93.06 | | 60.36 | | | | |

+| | | | | | | | | |

+| T-CNN-1 (20.8) | 89.5 ± 1.0 | 97.0 ± 1.0 | 20.6 ± 1.4 | 45.7 ± 1.2 | 42.7 | 34.9 | 42.1 | 13.2 |

+| T-CNN-2 (22.1) | 99.2 ± 0.3 | 98.2 ± 0.6 | 24.6 ± 1.0 | 47.3 ± 2.0 | 62.9 | 59.6 | 70.2 | 39.7 |

+| T-CNN-3 (23.4) | 99.2 ± 0.2 | 98.1 ± 1.0 | 27.8 ± 1.2 | 48.7 ± 1.3 | 71.1 | 69.4 | 78.6 | 51.2 |

+| T-CNN-4 (24.7) | 98.8 ± 0.2 | 97.8 ± 0.9 | 25.4 ± 1.3 | 47.2 ± 1.4 | 71.1 | 69.4 | 76.9 | 28.6 |

+| T-CNN-5 (25.1) | 98.1 ± 0.4 | 97.1 ± 1.2 | 19.1 ± 1.8 | 45.9 ± 1.5 | 65.8 | 54.7 | 72.1 | 24.6 |

+| AlexNet (60.9) | 98.9 ± 0.3 | 98.7 ± 0.6 | 22.7 ± 1.3 | 47.6 ± 1.4 | 66.3 | 65.7 | 73.1 | 57.1 |

+

+

+### Citations

+

+Mao, J., & Jain, A. K. (1992). Texture classification and segmentation using multiresolution simultaneous autoregressive models. Pattern Recognition, 25(2), 173–188.

+

+Kylberg, G. (2011). Kylberg texture dataset v. 1.0. Centre for Image Analysis, Swedish University of Agricultural Sciences and Uppsala University.

+

+Andrearczyk, V., & Whelan, P. F. (2016). Using filter banks in Convolutional Neural Networks for texture classification. Pattern Recognition Letters, 84, 63–69. https://doi.org/10.1016/j.patrec.2016.08.016
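The distance-constrained kernel described in the README text above can be illustrated with a small NumPy sketch. This is not the package's `ConvAR` implementation, which is not shown in this diff. The function names `distance_groups` and `fit_tied_kernel` are hypothetical, and ordinary least squares is swapped in as an assumed stand-in for whatever optimizer the package uses. The sketch ties one weight to each distance ring around the center pixel and applies dilation by scaling the neighbor offsets.

```python
import numpy as np


def distance_groups(radius, dilation=1):
    """Group kernel offsets by Euclidean distance from the center.

    Returns (distance, offsets) pairs sorted by distance. Distances are
    measured on the undilated kernel; offsets are (row, col) shifts already
    scaled by the dilation factor. The center offset (0, 0) is excluded
    because it is the prediction target.
    """
    groups = {}
    for dr in range(-radius, radius + 1):
        for dc in range(-radius, radius + 1):
            if dr == 0 and dc == 0:
                continue
            d2 = dr * dr + dc * dc  # squared distance keeps the keys exact
            groups.setdefault(d2, []).append((dr * dilation, dc * dilation))
    return [(np.sqrt(d2), offs) for d2, offs in sorted(groups.items())]


def fit_tied_kernel(image, radius=1, dilation=1):
    """Least-squares fit of one shared weight per distance group (assumed fit)."""
    groups = distance_groups(radius, dilation)
    pad = radius * dilation
    h, w = image.shape
    # Center pixels of every window that fits entirely inside the image.
    center = image[pad:h - pad, pad:w - pad]
    cols = []
    for _, offsets in groups:
        # One design-matrix column per group: the sum of all neighbors
        # at that distance, because they share a single weight.
        s = np.zeros_like(center, dtype=float)
        for dr, dc in offsets:
            s += image[pad + dr:h - pad + dr, pad + dc:w - pad + dc]
        cols.append(s.ravel())
    A = np.stack(cols, axis=1)
    weights, *_ = np.linalg.lstsq(A, center.ravel(), rcond=None)
    return weights
```

With `radius=2` the groups sit at distances $1, \sqrt{2}, 2, \sqrt{5}, 2\sqrt{2}$, so the first three match the $\{w_0, w_1, w_2\}$ example in the README. Calling `fit_tied_kernel` with several `dilation` values gives one weight vector per spatial scale. Concatenating those vectors into a feature vector for a classifier would be one possible use, though that step is an assumption here.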

diff --git a/convolutional_ar.egg-info/SOURCES.txt b/convolutional_ar.egg-info/SOURCES.txt

new file mode 100644

index 0000000..1c5a486

--- /dev/null

+++ b/convolutional_ar.egg-info/SOURCES.txt

@@ -0,0 +1,12 @@

+README.md

+setup.py

+convolutional_ar/__init__.py

+convolutional_ar/model.py

+convolutional_ar/psd.py

+convolutional_ar/reshape.py

+convolutional_ar/version.py

+convolutional_ar.egg-info/PKG-INFO

+convolutional_ar.egg-info/SOURCES.txt

+convolutional_ar.egg-info/dependency_links.txt

+convolutional_ar.egg-info/requires.txt

+convolutional_ar.egg-info/top_level.txt

\ No newline at end of file

diff --git a/convolutional_ar.egg-info/dependency_links.txt b/convolutional_ar.egg-info/dependency_links.txt

new file mode 100644

index 0000000..8b13789

--- /dev/null

+++ b/convolutional_ar.egg-info/dependency_links.txt

@@ -0,0 +1 @@

+

diff --git a/convolutional_ar.egg-info/requires.txt b/convolutional_ar.egg-info/requires.txt

new file mode 100644

index 0000000..84ec485

--- /dev/null

+++ b/convolutional_ar.egg-info/requires.txt

@@ -0,0 +1,16 @@

+numba==0.58.1

+numpy==1.26.2

+matplotlib==3.8.2

+scikit-learn==1.3.2

+scipy==1.11.4

+spectrum==0.8.1

+torch==2.1.2

+torchvision==0.16.2

+

+[all]

+pytest

+pytest-cov

+

+[tests]

+pytest

+pytest-cov

diff --git a/convolutional_ar.egg-info/top_level.txt b/convolutional_ar.egg-info/top_level.txt

new file mode 100644

index 0000000..4c45b91

--- /dev/null

+++ b/convolutional_ar.egg-info/top_level.txt

@@ -0,0 +1 @@

+convolutional_ar

diff --git a/convolutional_ar/__pycache__/__init__.cpython-311.pyc b/convolutional_ar/__pycache__/__init__.cpython-311.pyc

new file mode 100644

index 0000000..e65541a

Binary files /dev/null and b/convolutional_ar/__pycache__/__init__.cpython-311.pyc differ

diff --git a/convolutional_ar/__pycache__/model.cpython-311.pyc b/convolutional_ar/__pycache__/model.cpython-311.pyc

new file mode 100644

index 0000000..a435c78

Binary files /dev/null and b/convolutional_ar/__pycache__/model.cpython-311.pyc differ

diff --git a/texture_curet.ipynb b/texture_curet.ipynb

index 77f5781..7e54ed5 100644

--- a/texture_curet.ipynb

+++ b/texture_curet.ipynb

@@ -22,7 +22,7 @@

"from sklearn.model_selection import train_test_split, StratifiedKFold\n",

"from sklearn.metrics import roc_auc_score\n",

"\n",

- "from convolutional_ar.model import ConvolutionalAR"

+ "from convolutional_ar.model import ConvAR"

]

},

{

@@ -62,81 +62,10 @@

},

{

"cell_type": "code",

- "execution_count": 3,

+ "execution_count": null,

"id": "f4e6c26a-7bb2-447c-be94-5329d0844fd1",

"metadata": {},

- "outputs": [

- {

- "data": {

- "application/vnd.jupyter.widget-view+json": {

- "model_id": "355a22db14114a9483815f6446f2c881",

- "version_major": 2,

- "version_minor": 0

- },

- "text/plain": [

- " 0%| | 0/5612 [00:00"

- ]

- },

- "metadata": {},

- "output_type": "display_data"

- }

- ],

+ "outputs": [],

"source": [

"classes = {f\"sample_{i}\": i for i in range(61)}\n",

"\n",

@@ -245,19 +154,11 @@

" axes[i].get_legend().remove()\n",

" axes[i].set_title(f\"{c}\")"

]

- },

- {

- "cell_type": "code",

- "execution_count": null,

- "id": "0549d7fe-31fb-4705-9be9-2b66bc3154ff",

- "metadata": {},

- "outputs": [],

- "source": []

}

],

"metadata": {

"kernelspec": {

- "display_name": "Python 3 (ipykernel)",

+ "display_name": "convolutional_ar",

"language": "python",

"name": "python3"

},

@@ -271,7 +172,7 @@

"name": "python",

"nbconvert_exporter": "python",

"pygments_lexer": "ipython3",

- "version": "3.11.5"

+ "version": "3.11.14"

}

},

"nbformat": 4,

diff --git a/texture_kth-tips2-b.ipynb b/texture_kth-tips2-b.ipynb

index cb3e6bb..574d149 100644

--- a/texture_kth-tips2-b.ipynb

+++ b/texture_kth-tips2-b.ipynb

@@ -22,7 +22,7 @@

"from sklearn.model_selection import train_test_split, StratifiedKFold\n",

"from sklearn.metrics import roc_auc_score\n",

"\n",

- "from convolutional_ar.model import ConvolutionalAR"

+ "from convolutional_ar.model import ConvAR"

]

},

{

@@ -49,31 +49,10 @@

},

{

"cell_type": "code",

- "execution_count": 3,

+ "execution_count": null,

"id": "d8a22e29-13bc-4ec0-a4f9-47b2bb3a2482",

"metadata": {},

- "outputs": [

- {

- "data": {

- "text/plain": [

- "{'aluminium_foil': 0,\n",

- " 'brown_bread': 1,\n",

- " 'corduroy': 2,\n",

- " 'cork': 3,\n",

- " 'cotton': 4,\n",

- " 'cracker': 5,\n",

- " 'lettuce_leaf': 6,\n",

- " 'linen': 7,\n",

- " 'white_bread': 8,\n",

- " 'wood': 9,\n",

- " 'wool': 10}"

- ]

- },

- "execution_count": 3,

- "metadata": {},

- "output_type": "execute_result"

- }

- ],

+ "outputs": [],

"source": [

"classes = [\n",

" 'aluminium_foil',\n",

@@ -95,25 +74,10 @@

},

{

"cell_type": "code",

- "execution_count": 4,

+ "execution_count": null,

"id": "6ab4c8e4-7fef-4e21-af6f-f36528ba939f",

"metadata": {},

- "outputs": [

- {

- "data": {

- "application/vnd.jupyter.widget-view+json": {

- "model_id": "2a1fc8759c334e5c9cfd39eaeeccc363",

- "version_major": 2,

- "version_minor": 0

- },

- "text/plain": [

- " 0%| | 0/11 [00:00"

- ]

- },

- "metadata": {},

- "output_type": "display_data"

- }

- ],

+ "outputs": [],

"source": [

"# Plot AUC\n",

"from sklearn.metrics import RocCurveDisplay\n",

@@ -271,7 +200,7 @@

],

"metadata": {

"kernelspec": {

- "display_name": "Python 3 (ipykernel)",

+ "display_name": "convolutional_ar",

"language": "python",

"name": "python3"

},

@@ -285,7 +214,7 @@

"name": "python",

"nbconvert_exporter": "python",

"pygments_lexer": "ipython3",

- "version": "3.11.5"

+ "version": "3.11.14"

}

},

"nbformat": 4,

diff --git a/texture_kylberg.ipynb b/texture_kylberg.ipynb

index 6834cc3..4de9691 100644

--- a/texture_kylberg.ipynb

+++ b/texture_kylberg.ipynb

@@ -22,7 +22,7 @@

"from sklearn.model_selection import train_test_split, StratifiedKFold\n",

"from sklearn.metrics import roc_auc_score\n",

"\n",

- "from convolutional_ar.model import ConvolutionalAR"

+ "from convolutional_ar.model import ConvAR"

]

},

{

@@ -46,7 +46,7 @@

},

{

"cell_type": "code",

- "execution_count": 2,

+ "execution_count": null,

"id": "031422b1-4c1e-44c9-ba6c-92a3d7b976e3",

"metadata": {},

"outputs": [],

@@ -92,81 +92,10 @@

},

{

"cell_type": "code",

- "execution_count": 3,

+ "execution_count": null,

"id": "f4e6c26a-7bb2-447c-be94-5329d0844fd1",

"metadata": {},

- "outputs": [

- {

- "data": {

- "application/vnd.jupyter.widget-view+json": {

- "model_id": "e3f9d87f31784d21ae1581e052673e80",

- "version_major": 2,

- "version_minor": 0

- },

- "text/plain": [

- " 0%| | 0/4480 [00:00"

- ]

- },

- "metadata": {},

- "output_type": "display_data"

- }

- ],

+ "outputs": [],

"source": [

"# Plot examples\n",

"from sklearn.metrics import RocCurveDisplay\n",

@@ -278,7 +187,7 @@

],

"metadata": {

"kernelspec": {

- "display_name": "Python 3 (ipykernel)",

+ "display_name": "convolutional_ar",

"language": "python",

"name": "python3"

},

@@ -292,7 +201,7 @@

"name": "python",

"nbconvert_exporter": "python",

"pygments_lexer": "ipython3",

- "version": "3.11.5"

+ "version": "3.11.14"

}

},

"nbformat": 4,

diff --git a/vectors_kth.npy b/vectors_kth.npy

new file mode 100644

index 0000000..ad47089

Binary files /dev/null and b/vectors_kth.npy differ



+

+Multiple convolution kernels are learned to account for various spatial scales in image. This is learned by dialating the kernel.

+

+## Datasets

+

+Kylberg textures. Examples of each class:

+

+

+

+

+

+

+

+## Results

+

+The top row reports the ConvAR model from this repository. The remaining rows (CNNs with millions of parameters; the count in millions is given in parentheses) are the results reported

+in Andrearczyk & Whelan, 2016.

+

+

+| Model | Kylberg | CUReT | DTD | kth-tips-2b | ImNet-T | ImNet-S1 | ImNet-S2 | ImageNet |

+|:--------------:|:-----------|:-----------|:-----------|:------------|:--------|:--------|:----------|:---------|

+| ConvAR | 99.6 | 93.06 | | 60.36 | | | | |

+| | | | | | | | | |

+| T-CNN-1 (20.8) | 89.5 ± 1.0 | 97.0 ± 1.0 | 20.6 ± 1.4 | 45.7 ± 1.2 | 42.7 | 34.9 | 42.1 | 13.2 |

+| T-CNN-2 (22.1) | 99.2 ± 0.3 | 98.2 ± 0.6 | 24.6 ± 1.0 | 47.3 ± 2.0 | 62.9 | 59.6 | 70.2 | 39.7 |

+| T-CNN-3 (23.4) | 99.2 ± 0.2 | 98.1 ± 1.0 | 27.8 ± 1.2 | 48.7 ± 1.3 | 71.1 | 69.4 | 78.6 | 51.2 |

+| T-CNN-4 (24.7) | 98.8 ± 0.2 | 97.8 ± 0.9 | 25.4 ± 1.3 | 47.2 ± 1.4 | 71.1 | 69.4 | 76.9 | 28.6 |

+| T-CNN-5 (25.1) | 98.1 ± 0.4 | 97.1 ± 1.2 | 19.1 ± 1.8 | 45.9 ± 1.5 | 65.8 | 54.7 | 72.1 | 24.6 |

+| AlexNet (60.9) | 98.9 ± 0.3 | 98.7 ± 0.6 | 22.7 ± 1.3 | 47.6 ± 1.4 | 66.3 | 65.7 | 73.1 | 57.1 |

+

+

+### Citations

+

+Mao, J., & Jain, A. K. (1992). Texture classification and segmentation using multiresolution simultaneous autoregressive models. Pattern Recognition, 25(2), 173–188.

+

+Kylberg, G. (2011). Kylberg texture dataset v. 1.0. Centre for Image Analysis, Swedish University of Agricultural Sciences and Uppsala University.

+

+Andrearczyk, V., & Whelan, P. F. (2016). Using filter banks in Convolutional Neural Networks for texture classification. Pattern Recognition Letters, 84, 63–69. https://doi.org/10.1016/j.patrec.2016.08.016

diff --git a/convolutional_ar.egg-info/SOURCES.txt b/convolutional_ar.egg-info/SOURCES.txt

new file mode 100644

index 0000000..1c5a486

--- /dev/null

+++ b/convolutional_ar.egg-info/SOURCES.txt

@@ -0,0 +1,12 @@

+README.md

+setup.py

+convolutional_ar/__init__.py

+convolutional_ar/model.py

+convolutional_ar/psd.py

+convolutional_ar/reshape.py

+convolutional_ar/version.py

+convolutional_ar.egg-info/PKG-INFO

+convolutional_ar.egg-info/SOURCES.txt

+convolutional_ar.egg-info/dependency_links.txt

+convolutional_ar.egg-info/requires.txt

+convolutional_ar.egg-info/top_level.txt

\ No newline at end of file

diff --git a/convolutional_ar.egg-info/dependency_links.txt b/convolutional_ar.egg-info/dependency_links.txt

new file mode 100644

index 0000000..8b13789

--- /dev/null

+++ b/convolutional_ar.egg-info/dependency_links.txt

@@ -0,0 +1 @@

+

diff --git a/convolutional_ar.egg-info/requires.txt b/convolutional_ar.egg-info/requires.txt

new file mode 100644

index 0000000..84ec485

--- /dev/null

+++ b/convolutional_ar.egg-info/requires.txt

@@ -0,0 +1,16 @@

+numba==0.58.1

+numpy==1.26.2

+matplotlib==3.8.2

+scikit-learn==1.3.2

+scipy==1.11.4

+spectrum==0.8.1

+torch==2.1.2

+torchvision==0.16.2

+

+[all]

+pytest

+pytest-cov

+

+[tests]

+pytest

+pytest-cov

diff --git a/convolutional_ar.egg-info/top_level.txt b/convolutional_ar.egg-info/top_level.txt

new file mode 100644

index 0000000..4c45b91

--- /dev/null

+++ b/convolutional_ar.egg-info/top_level.txt

@@ -0,0 +1 @@

+convolutional_ar

diff --git a/convolutional_ar/__pycache__/__init__.cpython-311.pyc b/convolutional_ar/__pycache__/__init__.cpython-311.pyc

new file mode 100644

index 0000000..e65541a

Binary files /dev/null and b/convolutional_ar/__pycache__/__init__.cpython-311.pyc differ

diff --git a/convolutional_ar/__pycache__/model.cpython-311.pyc b/convolutional_ar/__pycache__/model.cpython-311.pyc

new file mode 100644

index 0000000..a435c78

Binary files /dev/null and b/convolutional_ar/__pycache__/model.cpython-311.pyc differ

diff --git a/texture_curet.ipynb b/texture_curet.ipynb

index 77f5781..7e54ed5 100644

--- a/texture_curet.ipynb

+++ b/texture_curet.ipynb

@@ -22,7 +22,7 @@

"from sklearn.model_selection import train_test_split, StratifiedKFold\n",

"from sklearn.metrics import roc_auc_score\n",

"\n",

- "from convolutional_ar.model import ConvolutionalAR"

+ "from convolutional_ar.model import ConvAR"

]

},

{

@@ -62,81 +62,10 @@

},

{

"cell_type": "code",

- "execution_count": 3,

+ "execution_count": null,

"id": "f4e6c26a-7bb2-447c-be94-5329d0844fd1",

"metadata": {},

- "outputs": [

- {

- "data": {

- "application/vnd.jupyter.widget-view+json": {

- "model_id": "355a22db14114a9483815f6446f2c881",

- "version_major": 2,

- "version_minor": 0

- },

- "text/plain": [

- " 0%| | 0/5612 [00:00"

- ]

- },

- "metadata": {},

- "output_type": "display_data"

- }

- ],

+ "outputs": [],

"source": [

"classes = {f\"sample_{i}\": i for i in range(61)}\n",

"\n",

@@ -245,19 +154,11 @@

" axes[i].get_legend().remove()\n",

" axes[i].set_title(f\"{c}\")"

]

- },

- {

- "cell_type": "code",

- "execution_count": null,

- "id": "0549d7fe-31fb-4705-9be9-2b66bc3154ff",

- "metadata": {},

- "outputs": [],

- "source": []

}

],

"metadata": {

"kernelspec": {

- "display_name": "Python 3 (ipykernel)",

+ "display_name": "convolutional_ar",

"language": "python",

"name": "python3"

},

@@ -271,7 +172,7 @@

"name": "python",

"nbconvert_exporter": "python",

"pygments_lexer": "ipython3",

- "version": "3.11.5"

+ "version": "3.11.14"

}

},

"nbformat": 4,

diff --git a/texture_kth-tips2-b.ipynb b/texture_kth-tips2-b.ipynb

index cb3e6bb..574d149 100644

--- a/texture_kth-tips2-b.ipynb

+++ b/texture_kth-tips2-b.ipynb

@@ -22,7 +22,7 @@

"from sklearn.model_selection import train_test_split, StratifiedKFold\n",

"from sklearn.metrics import roc_auc_score\n",

"\n",

- "from convolutional_ar.model import ConvolutionalAR"

+ "from convolutional_ar.model import ConvAR"

]

},

{

@@ -49,31 +49,10 @@

},

{

"cell_type": "code",

- "execution_count": 3,

+ "execution_count": null,

"id": "d8a22e29-13bc-4ec0-a4f9-47b2bb3a2482",

"metadata": {},

- "outputs": [

- {

- "data": {

- "text/plain": [

- "{'aluminium_foil': 0,\n",

- " 'brown_bread': 1,\n",

- " 'corduroy': 2,\n",

- " 'cork': 3,\n",

- " 'cotton': 4,\n",

- " 'cracker': 5,\n",

- " 'lettuce_leaf': 6,\n",

- " 'linen': 7,\n",

- " 'white_bread': 8,\n",

- " 'wood': 9,\n",

- " 'wool': 10}"

- ]

- },

- "execution_count": 3,

- "metadata": {},

- "output_type": "execute_result"

- }

- ],

+ "outputs": [],

"source": [

"classes = [\n",

" 'aluminium_foil',\n",

@@ -95,25 +74,10 @@

},

{

"cell_type": "code",

- "execution_count": 4,

+ "execution_count": null,

"id": "6ab4c8e4-7fef-4e21-af6f-f36528ba939f",

"metadata": {},

- "outputs": [

- {

- "data": {

- "application/vnd.jupyter.widget-view+json": {

- "model_id": "2a1fc8759c334e5c9cfd39eaeeccc363",

- "version_major": 2,

- "version_minor": 0

- },

- "text/plain": [

- " 0%| | 0/11 [00:00"

- ]

- },

- "metadata": {},

- "output_type": "display_data"

- }

- ],

+ "outputs": [],

"source": [

"# Plot AUC\n",

"from sklearn.metrics import RocCurveDisplay\n",

@@ -271,7 +200,7 @@

],

"metadata": {

"kernelspec": {

- "display_name": "Python 3 (ipykernel)",

+ "display_name": "convolutional_ar",

"language": "python",

"name": "python3"

},

@@ -285,7 +214,7 @@

"name": "python",

"nbconvert_exporter": "python",

"pygments_lexer": "ipython3",

- "version": "3.11.5"

+ "version": "3.11.14"

}

},

"nbformat": 4,
